Warfare spurred on the welfare state in the 20th century

...but it probably won’t in future.

The link between warfare and welfare is counter-intuitive. One is about violence and destruction, the other about altruism, support and care. Even the term “welfare state” – at least in the English-speaking world – was popularised as a progressive and democratic alternative to the Nazi “warfare state” during World War II.

And yet, as new research shows, the link goes far beyond rhetoric. Across the industrialised world, mass war spurred the development of the welfare state in the 20th century.

Left-wing champions of the welfare state have long pointed to the so-called “guns versus butter” trade-off as a way to argue the exact opposite. The trade-off suggests a negative relationship between changes in military spending and social spending. Put differently, armament and warfare should lead to welfare state stagnation or even cutbacks, not growth.

The origin of the phrase is usually attributed to Nazi leader Hermann Göring, who never used it, but nonetheless repeatedly played on the theme. In a speech in 1935 he declared: “Ore has always made an empire strong, butter and lard have made a country fat at most.” In any case, the idea of guns and butter stuck.

Adolf Hitler and Hermann Göring in 1938. (Photo: German Federal Archive via Wikimedia, CC BY-SA)

Both guns and butter

Yet there is surprisingly little evidence of a strong guns versus butter trade-off in government spending of Western countries during the Cold War and after. Granted, just before or during a war, funds tend to flow towards the military. Yet in the long run, higher defence spending doesn’t generally lead to lower spending on pensions, unemployment or healthcare. Instead, the massive surge of public spending in the middle of the 20th century often left space for both guns and butter.

As a group of historians and political scientists shows in Warfare and Welfare, a book I recently co-edited, a whole range of mechanisms causally link mass warfare and welfare state development, almost always producing a positive and sizeable effect.

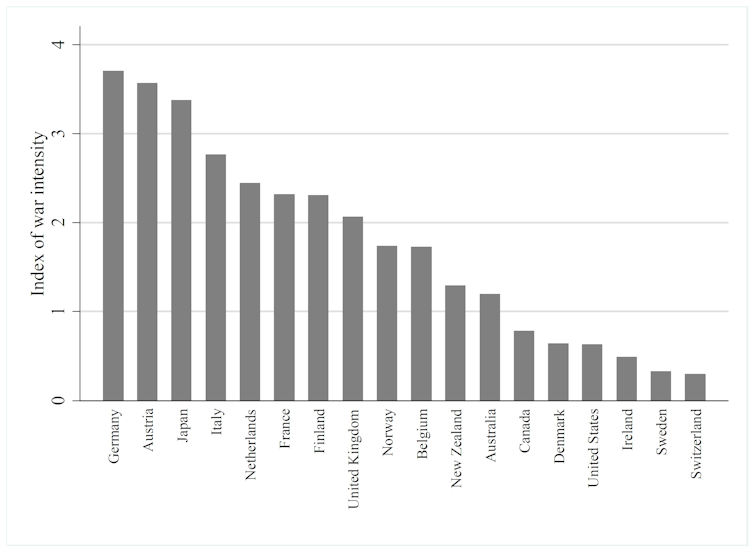

In a statistical analysis, Herbert Obinger and Carina Schmitt measured the “intensity” of World War II across countries – based on information on duration, casualties, economic gains or losses and whether war was fought on home territory or not. They found that, controlling for various other influences, an increase of one unit on the intensity index – or hypothetically moving, say, from Norway to Italy on the graph below – lifted the social spending to GDP ratio by 1.14 percentage points. While this sounds like a small effect, the average social spending level of these countries was only 8.5% of GDP in the early 1950s, so the increase amounts to roughly 13% of that average. Over time, the effect disappeared, but only about 25 years after the end of the war. Social spending kept growing, but for other reasons.

Index of war intensity. (Graph: Obinger et al. (2018) in Obinger/Petersen/Starke (eds.): Warfare and Welfare, OUP.)

Several countries have introduced new welfare schemes during wartime. Take Japan, where the Pacific War of 1937 to 1945 was “the most innovative period in the development of welfare policy”, according to political scientist Gregory Kasza. War powerfully changed elites’ views on state intervention, even in Japan, a late-industrialising country without a significant labour movement. The Ministry of Health and Welfare was set up in 1938 after intense lobbying by the military. A national health insurance scheme quickly followed, as well as public pensions and unemployment relief.

Other wartime innovations have included the design of a social insurance system in Belgium in 1944 (the “Social Pact”) and the start of federal involvement in social policy in Australia. There was also an expansion and modernisation of poor relief in countries including France and Germany during World War I, when not only the poor, but large parts of the middle class, suddenly depended on support for survival.

Pre and post-war spending

Warfare has shaped welfare not only during periods of combat – preparation for war and military rivalry also had an impact. Concerns among the military leadership about the fitness of military recruits, for example, inspired early labour protection and social insurance legislation in 19th-century Austria.

Numerous welfare programmes have also swung into action to deal with the legacy of wars. The burden of caring for 1.5m disabled ex-servicemen, half a million war widows and almost 2m orphans made the Weimar Republic effectively a veterans’ welfare state. As a result, as much as 20% of the young republic’s budget was spent on veterans in the form of pensions, as well as modern rehabilitation schemes that paved the way for today’s policies for the disabled.

A disabled German war veteran in Berlin in 1923. (Photo: German Federal Archive via Wikimedia, CC BY-SA)

The British example is an interesting one. Unlike in many other countries, warfare and welfare are in fact tightly connected in public memory. The welfare state is closely linked to the “people’s war” of World War II – as in the NHS segment of the London Olympics opening ceremony in 2012.

Yet historian David Edgerton has joined others in arguing that this founding myth of the British welfare state – that it was essentially a wartime invention, laid down in the 1942 Beveridge Report and made possible by strong cross-class solidarity forged during the Blitz – is largely that: a myth. Rather than being created from scratch by Beveridge and implemented by the prime minister, Clement Attlee, in 1948, National Insurance built on important pre-war foundations. World War I, not II, was the key stimulus for welfare state expansion in the 1920s. The main element added in the 1940s was health services.

Concessions on the home front

Not only did the destruction and human suffering during war in the 14 countries my colleagues and I studied create “demand” for services and transfers, but there was often also a political dimension to it. Democratisation was far from fully achieved in many countries going into World War I. The need to keep the home front quiet forced even authoritarian governments like those of Germany and Austria to make concessions, for example, by acknowledging trade unions. This paved the way for post-war innovations such as unemployment insurance, which quickly spread in the interwar period so that, by 1940, some form of unemployment benefit was in place in virtually all Western countries. Before 1914, this had been inconceivable.

On the “supply” side, war has tended to increase state capacities in the form of taxation, creating a vastly enhanced state apparatus and the centralisation of power. As guns fall silent, these legacies of war have been used for peaceful ends, which helps explain the phenomenal rise of the welfare state after the war. By writing this, I’m in no way implying that warfare should be seen in a more positive light. The (mostly unintended) effects on welfare state development cannot outweigh the profound human suffering brought about by the two world wars, which killed an estimated 80m people.

Today, we are not seeing such big repercussions from warfare on welfare. It’s not that rich countries are less involved in wars. It’s the way in which they fight that matters. Mass armies have disappeared and been replaced by all-volunteer forces almost everywhere. Sweden, however, recently decided to reintroduce conscription. It remains to be seen whether other countries will follow.

Technological change, from nuclear weapons to cruise missiles and drones, has reduced the need for large armies. And voters have become unwilling to accept human losses in wars often fought far away from home.

Israel and, to a lesser extent, the US are the exceptions here. As analysts Michael Shalev and John Gal show in our book, the threat of war and the militarisation of society via gender-neutral conscription and reserve duties have a massive effect on the shape of the Israeli welfare state. More widely, in both Israel and the US, veterans and their families receive increasingly accessible, generous and universal benefits, leading to inequalities between the welfare provision for veterans and civilians.

For the most part, however, contemporary warfare is unlikely to influence welfare in the way it did in the past.

---------------

This article was originally published on The Conversation under a Creative Commons license.